The Dept. of Education Really Has Only 1 AI Use-Case?!

Plus, AutomatED's first GPT and a review of our most popular pieces from 2023.

[image created with Dall-E 3 via ChatGPT Plus]

Welcome to AutomatED: the newsletter on how to teach better with tech.

Each week, I share what I have learned — and am learning — about AI and tech in the university classroom. What works, what doesn't, and why.

In this week’s piece, I present AutomatED’s new Beta custom GPT, puzzle over the U.S. Department of Education, and look back at last year’s top AutomatED content.

👀 What to Keep an Eye on:

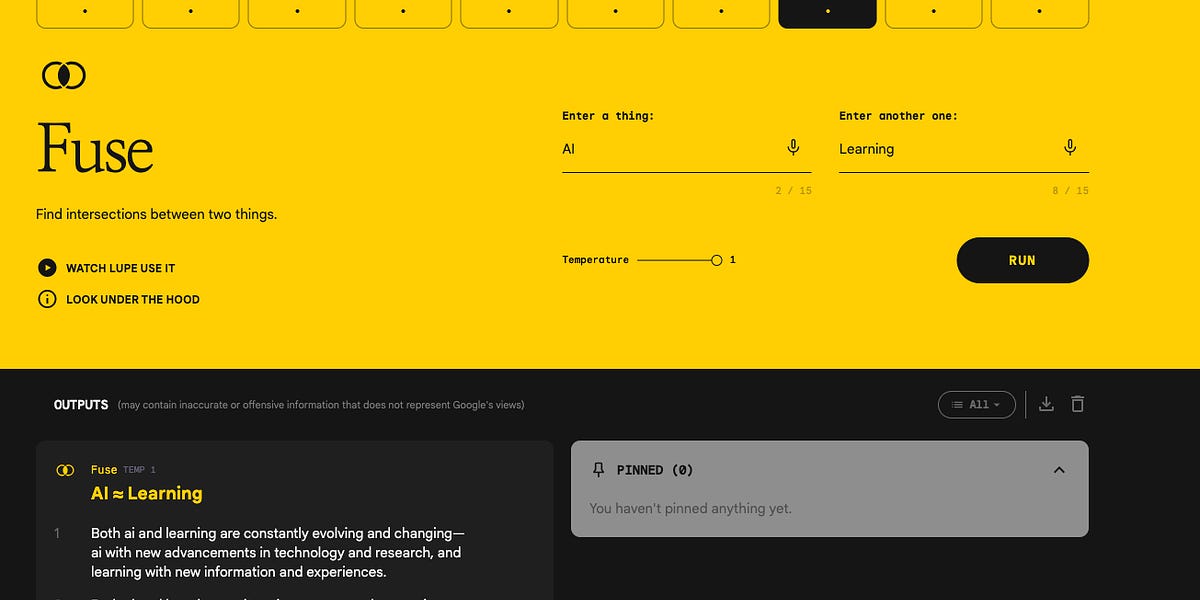

AutomatED’s GPTs

What if you could talk to a pedagogy expert to quickly learn how to better incorporate AI tools in your spring 2024 assignments? What if this expert’s advice was sensitive to your courses’ unique features, your own pedagogical preferences, your specific students, and how students in general might misuse AI?

I have good news. An expert like this is available to you right now, at least if you are an AutomatED Premium subscriber!

Earlier this week, we released our first AutomatED-built custom GPT agent to our Premium subscribers in the form of a Beta version. It is a GPT for creating university-level assignments that incorporates all of the insights of our research, experimentation, and consultations of the past year, including our guide on designing assignments and assessments in the age of AI and our guide on discouraging and preventing AI misuse. We spent more than a month perfecting its lengthy instructions and knowledge base.

So far, our Premium subscribers are loving it, just like the Alpha testers before them. Here’s a comment from one of our power users:

Not only did this tool provide great revision ideas for an assignment I had already written, it helped incorporate AI exploration into the assignment in a pedagogically strong way.

We expect to continue to improve the GPT in the coming weeks, but it is already capable of helping even the most experienced educators develop and refine their assignments. Many are reporting that it is significantly better than using a generic LLM like ChatGPT4. I am using it a lot to prepare for this coming semester, as I am teaching a new course (and working to perfect another that I have taught only once)!

If you don’t have Premium, you will soon be able to access this GPT directly via the GPT Store, which OpenAI announced yesterday will be rolled out by this coming Friday. We plan to release our GPT — and others we are working on — to the Store soon. Follow our Twitter and stay tuned to the newsletter for updates on this front.

In the meantime, Premium subscribers have exclusive access to this GPT, and they will have exclusive access to future Betas of our other GPTs before we release them to the Store.

Of course, there are other benefits of Premium, like our comprehensive guides. In fact, next week we will release a Premium guide on developing or refining your AI policy for your syllabus. It is a winter break bounty here at AutomatED!

Later this spring, we plan on releasing another Premium guide on teaching students to use AI tools, as well as a guide on how professors can improve their workflow with project management software like Asana.

If you aren’t an AutomatED Premium subscriber ($5/month, $50/year), now is the time to join, not only because you wouldn’t want to miss the above content as you prepare for the spring semester but also because you can lock in the current price for a year. See the bottom of this email for three ways to get Premium, including one that is completely free.

😯 The Surprise of the Week:

1 Dept. of Education AI Use Case?!

Take a look at this graph:

A graph from page 2 of GAO-24-105980.

These are the quantities of AI use cases reported by United States Federal government agencies in the Government Accountability Office’s December 12, 2023 report “Artificial Intelligence: Agencies Have Begun Implementation but Need to Complete Key Requirements.”

While I am not surprised to find NASA at the top of the list, I was very surprised to see that the Department of Education (ED) reported only 1 AI use case to the GAO for the 2022 fiscal year (i.e., the year ending September 30, 2022). How is this possible?

Digging deeper into the full text of the GAO report, I saw that some agencies had incomplete or missing reports of AI use cases, so my initial thought was that ED might not be reporting all of their AI use cases. But then I saw that ED’s own inventory of AI use cases has only 2 use cases that they will deploy or have deployed: Aidan (a virtual assistant for the Federal Student Aid program that answers users’ financial aid questions) and IPAC (a bot that downloads spreadsheets from the Treasury’s databases, adjusts them to fit the systems of the Department of Education, and uploads them).

Yet again, I ask: that’s all?! Yikes!

You might think that I am being unfair. After all, ED’s Office of Educational Technology has engaged seriously with AI, even releasing a report on AI and the “Future of Teaching and Learning” with some excellent insights and recommendations back in May 2023 (those of you who have been subscribed since the beginning will remember that we reported on it at that time).

But consider ED’s mission:

- Strengthen the Federal commitment to assuring access to equal educational opportunity for every individual;

- Supplement and complement the efforts of states, the local school systems and other instrumentalities of the states, the private sector, public and private nonprofit educational research institutions, community-based organizations, parents, and students to improve the quality of education;

- Encourage the increased involvement of the public, parents, and students in Federal education programs;

- Promote improvements in the quality and usefulness of education through Federally supported research, evaluation, and sharing of information;

- Improve the coordination of Federal education programs;

- Improve the management of Federal education activities; and

- Increase the accountability of Federal education programs to the President, the Congress, and the public.

Given ED’s mission, you would think that there would be more potential AI use cases that they would be reporting on. In fact, you might think that ED should be incorporating AI heavily in their work.

For instance, I immediately can think of three broad kinds (and there are countless others):

- Data analytics AI use cases, where ED teams use AI tools to better analyze and represent the copious data they have at their fingertips;

- Research analysis AI use cases, where ED teams use BERT-style models (like the well-known SciBERT) to build education-specific language models of the educational research literature, in support of their role influencing curricular recommendations; and

- Distance education pedagogy AI use cases, where ED teams analyze ways that AI tools can improve outcomes of students in distance education programs by simulating some of the benefits of in-person education (e.g., personalization).

But maybe I am mistaken or maybe I have an incomplete picture of ED’s engagement with AI. So, I have reached out to the aforementioned Office of Educational Technology to see if they have any insight into this surprising report, and I will report back as soon as I get more information.

🕸️ Top AutomatED Pieces from 2023, by Web Views

As 2023 came to a close, I dug deep in our analytics dashboard to see which of our pieces were most popular. Below, I link 9 of our most popular pieces. First, I list the top 3 pieces by web views, then the top 3 pieces by email open rate, and finally the top 3 pieces by click rate. If you’re a new subscriber, you might want to take a look at what your colleagues found most interesting in the past 10 months of AutomatED!

✉️ Top AutomatED Pieces from 2023, by Email Open Rate

🖱️ Top AutomatED Pieces from 2023, by Click Rate

🔗 Links

Late in the fall of 2023, we started posting Premium pieces every two weeks, consisting of comprehensive guides, releases of exclusive AI tools like AutomatED-built GPTs, Q&As with the AutomatED team, in-depth explanations of AI use-cases, and other deep dives.

So far, we have three Premium pieces:

To get access to Premium, you can either upgrade for $5/month (or $50/year) or get one free month for every two (non-Premium) subscribers that you refer to AutomatED.

To get credit for referring subscribers to AutomatED, you need to click on the button below or copy/paste the included link in an email to them.

(They need to subscribe after clicking your link, or otherwise their subscription won’t count for you. If you cannot see the referral section immediately below, you need to subscribe first and/or log in.)